March 2026 · ForensicMark Blog

EU AI Act Article 50: watermarking compliance guide

Article 50 of the EU AI Act requires AI-generated images, audio, and video to carry machine-readable markings. Enforcement begins August 2026. Here's what you need to do.

What Article 50 requires

Article 50 of the EU AI Act (Regulation (EU) 2024/1689) establishes transparency obligations for providers of AI systems that generate synthetic content. The key requirement for images: AI-generated images must be marked in a machine-readable format and be detectable as artificially generated. Article 50 itself does not prescribe a specific technology. Recital 133 lists example techniques, including watermarks, metadata identifications, and cryptographic methods for proving provenance and authenticity. Invisible watermarks and C2PA content credentials are common implementations of those categories.

The obligation applies at the point of generation. If your system generates an image using an AI model, that image must carry the marking before it is delivered to users or published. Retroactive marking after distribution does not satisfy the requirement.

Timeline and enforcement date

The EU AI Act entered into force on 1 August 2024. Article 50's transparency obligations for synthetic content apply from 2 August 2026, two years after entry into force. As of March 2026, you have approximately five months to reach compliance.
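For planning purposes, the remaining runway can be computed directly from the dates above. This is a minimal sketch; the function name and the example "today" date are illustrative assumptions, not part of any official tooling.

```python
from datetime import date

# Dates taken from the text above.
ENTRY_INTO_FORCE = date(2024, 8, 1)
ARTICLE_50_APPLIES = date(2026, 8, 2)


def days_until_applicable(today: date) -> int:
    """Days left before Article 50 applies (zero or negative once it does)."""
    return (ARTICLE_50_APPLIES - today).days


if __name__ == "__main__":
    # Hypothetical planning date: 1 March 2026.
    print(days_until_applicable(date(2026, 3, 1)))
```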

Some provisions of the Act apply earlier. Prohibited AI practices have been banned since 2 February 2025, and the governance and GPAI model rules have applied since 2 August 2025. Article 50 takes effect with the bulk of the remaining provisions in the August 2026 wave; certain high-risk obligations follow in August 2027.

Who is affected

The obligation applies to providers and deployers of AI systems that generate or manipulate image, audio, or video content. This includes:

- Companies building AI image generation products (text-to-image, image editing, inpainting)

- Platforms that allow users to generate AI images and publish them

- Any organization generating AI-produced marketing, editorial, or social media images for EU audiences

- Non-EU companies if their systems serve EU users — the Act has broad extraterritorial reach, similar to GDPR

There is a limited carve-out for content that forms part of an evidently artistic, creative, satirical, or fictional work. It narrows the deployer's visible disclosure duty to a form that does not hamper display or enjoyment of the work; it does not remove disclosure altogether. In practice, most commercial use cases cannot rely on this carve-out and should implement technical markings.

The two compliant methods: invisible watermarks and C2PA

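Article 50 does not name specific products or standards. Recital 133 gives examples of suitable techniques, including watermarks, metadata identifications, and cryptographic methods for proving provenance and authenticity of content. Invisible watermarks fall in the first category, and C2PA content credentials combine the second and third. Neither is named as an exclusive route to compliance, but together they map directly onto the techniques the legislator lists.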

Invisible watermarks embed a payload directly into image pixels that signals AI origin. A robust watermark is designed to survive typical transformations such as JPEG compression, moderate cropping, screenshots, and social media re-encoding, so it remains detectable after the image has been shared and re-shared. For images that circulate on social media, where metadata is routinely stripped, an invisible watermark is often the only marking that persists.

C2PA content credentials attach a cryptographically signed manifest to the file that records the AI system used, the date of generation, and the organization responsible. This provides verifiable, tamper-evident provenance when the manifest is present. However, C2PA manifests are stored in file metadata and are stripped by screenshots and most social media platforms.
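The tamper-evidence property can be sketched in a few lines. This is a simplified stand-in, not the C2PA format: real C2PA manifests are embedded in a JUMBF container and signed with COSE signatures backed by X.509 certificates, whereas this sketch uses a JSON claim and an HMAC shared secret. All function and field names are hypothetical.

```python
import hashlib
import hmac
import json


def build_manifest(image_bytes: bytes, generator: str, organization: str,
                   created_at: str, signing_key: bytes) -> dict:
    """Bind a provenance claim to the exact image bytes and sign the claim."""
    claim = {
        "generator": generator,
        "organization": organization,
        "created_at": created_at,
        "content_sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    # HMAC is a stand-in for the certificate-based signature real C2PA uses.
    signature = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(image_bytes: bytes, manifest: dict, signing_key: bytes) -> bool:
    """Return True only if the claim is unmodified and matches the image bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    return manifest["claim"]["content_sha256"] == hashlib.sha256(image_bytes).hexdigest()
```

Any change to the image bytes or to the claim makes verification fail, which is the tamper-evident behaviour described above. It also shows the weakness: if the manifest is dropped, there is nothing left to verify.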

Why you need both, not just one

The two methods have complementary strengths and weaknesses. C2PA provides cryptographic proof and a full audit trail, but it is lost when metadata is removed. Invisible watermarks survive metadata stripping, but they carry only a compact payload (typically 48–256 bits), not a full signed certificate chain.

The defensible compliance posture is to embed both at generation time. When the file is shared intact, the C2PA manifest provides the full verifiable credential. When the file is screenshotted or re-encoded, the invisible watermark persists as the residual AI-origin signal. A regulator or automated detection system can use whichever layer survives.
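The "whichever layer survives" logic might look like the following on the verification side. This is a sketch under assumptions: the two inputs are presumed to come from a C2PA validator and a watermark detector that are not shown here, and the type and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProvenanceResult:
    ai_generated: Optional[bool]  # None means no marking was found
    source: str                   # "c2pa", "watermark", or "none"


def check_provenance(manifest_declares_ai: Optional[bool],
                     watermark_declares_ai: Optional[bool]) -> ProvenanceResult:
    """Prefer the signed manifest; fall back to the watermark if it is missing.

    Each argument is None when that layer is absent or could not be verified.
    """
    if manifest_declares_ai is not None:
        return ProvenanceResult(manifest_declares_ai, "c2pa")
    if watermark_declares_ai is not None:
        return ProvenanceResult(watermark_declares_ai, "watermark")
    return ProvenanceResult(None, "none")
```

The manifest is checked first because it carries the richer, cryptographically verifiable record; the watermark is the fallback for screenshotted or re-encoded copies.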

Penalties for non-compliance

Violations of Article 50's transparency obligations are subject to administrative fines of up to €15 million or 3% of total worldwide annual turnover, whichever is higher. For large AI providers, 3% of global turnover represents a significant exposure. Enforcement will be handled by national market surveillance authorities in each EU member state, with coordination through the European AI Office.
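The "whichever is higher" rule is easy to misread, so here is the arithmetic as a small sketch. The function name is illustrative, amounts are whole euros, and it models only the general ceiling stated above, not the separate rule for SMEs in Article 99 or how an authority would set an actual fine.

```python
FIXED_CEILING_EUR = 15_000_000
TURNOVER_PERCENT = 3


def max_article_50_fine(worldwide_turnover_eur: int) -> int:
    """Upper bound of the fine: the higher of EUR 15 million or 3% of turnover."""
    turnover_based = worldwide_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CEILING_EUR, turnover_based)
```

The two limbs cross at a turnover of €500 million: below that the €15 million figure is the ceiling, above it the 3% figure is.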

Beyond financial penalties, non-compliance exposes companies to reputational risk in an environment where AI disclosure has become a consumer trust issue. Proactive compliance is a credibility signal, not just a legal obligation.

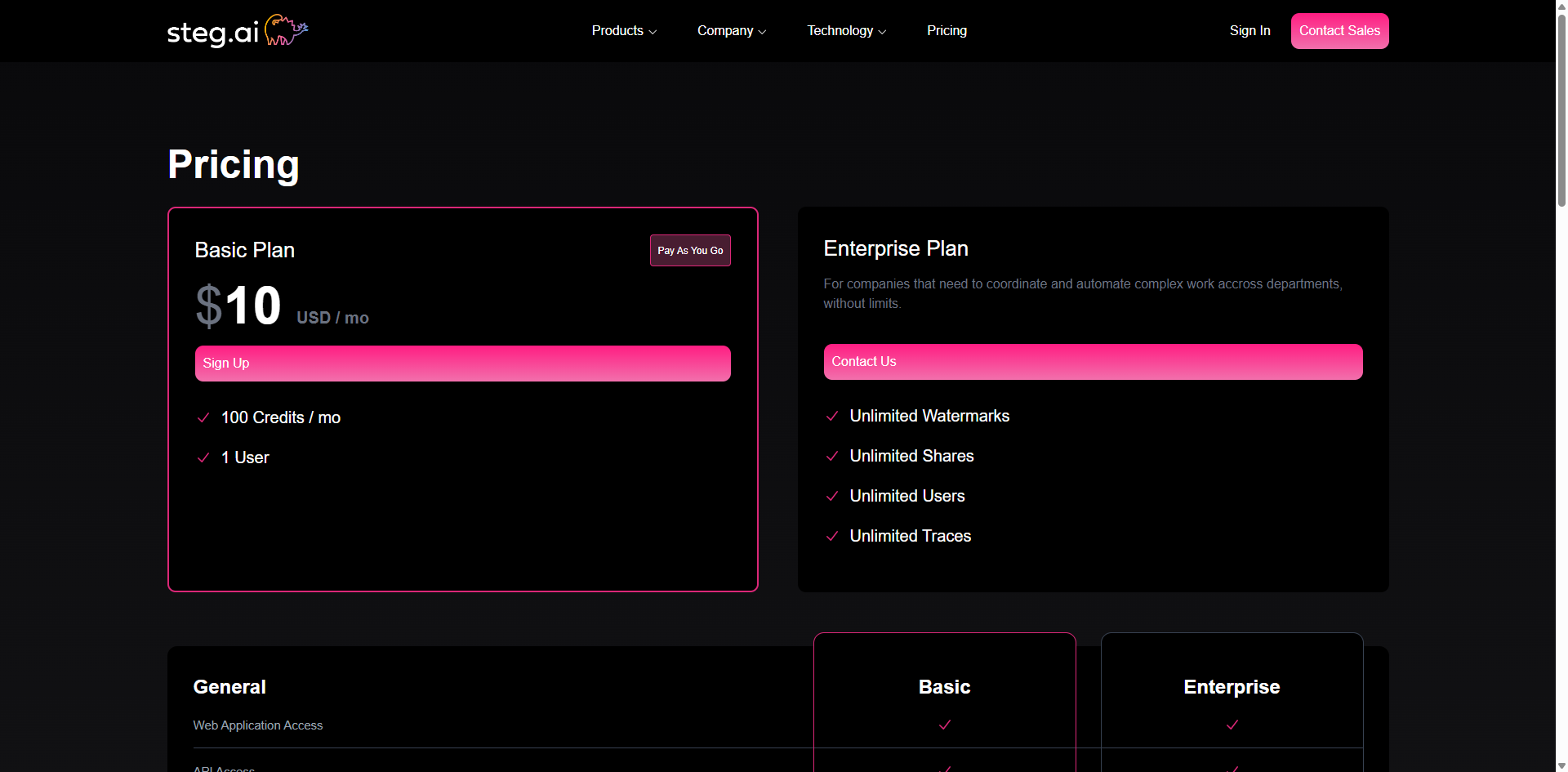

How other tools address EU AI Act compliance

Several vendors market EU AI Act compliance features. The screenshots below show how competing products position their watermarking and provenance offerings relative to Article 50.

Common themes across these approaches: invisible watermarks for robustness, C2PA for verifiability, and at-generation embedding. Where ForensicMark differs is in combining both layers in a single API call with no model fine-tuning required.

How ForensicMark satisfies Article 50

ForensicMark embeds an invisible neural watermark and attaches a C2PA manifest in a single API call. The watermark payload encodes a machine-readable AI-origin flag along with fields you define (system ID, model version, timestamp hash). The C2PA manifest records the generation event with a signed certificate. Both layers are written at generation time, before the image leaves your system, which satisfies the at-generation requirement of Article 50.
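To give a sense of how little room a watermark payload has, here is one way the fields mentioned above could fit into 64 bits. The layout is purely hypothetical and is not ForensicMark's actual payload format: 1 bit for the AI-origin flag, 15 bits for a system ID, 16 bits for a model version, and 32 bits for a truncated SHA-256 hash of the timestamp.

```python
import hashlib


def pack_payload(ai_generated: bool, system_id: int, model_version: int,
                 timestamp_iso: str) -> int:
    """Pack the fields into a 64-bit integer (hypothetical layout, see lead-in)."""
    if not 0 <= system_id < 2**15:
        raise ValueError("system_id must fit in 15 bits")
    if not 0 <= model_version < 2**16:
        raise ValueError("model_version must fit in 16 bits")
    digest = hashlib.sha256(timestamp_iso.encode("utf-8")).digest()
    ts_hash = int.from_bytes(digest[:4], "big")  # first 32 bits of the hash
    return (int(ai_generated) << 63) | (system_id << 48) | (model_version << 32) | ts_hash


def unpack_payload(payload: int) -> dict:
    """Split a 64-bit payload back into its fields."""
    return {
        "ai_generated": bool(payload >> 63),
        "system_id": (payload >> 48) & 0x7FFF,
        "model_version": (payload >> 32) & 0xFFFF,
        "timestamp_hash": payload & 0xFFFFFFFF,
    }
```

The timestamp is stored as a hash rather than a value, so it can be checked against a known generation record but not read back from the image alone.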

Detection is available via the ForensicMark detection tool and API — allowing you, regulators, or automated systems to verify the AI-origin marking even after the image has circulated online.